A week or two ago, I had a meeting with some colleagues to discuss future needs for Project Dasher. One of these needs relates to an exhibit where we're going to want to set aside the 3D visualization of sensor data and focus on the 2D case (i.e. where we shade a 2D floorplan with the sensor values).

When this came up I thought to myself "oh, this could be a good driver for us implementing a 2D workflow inside Dasher", something I've chewed on in the past. This time around, I started off thinking about whether to use a 2D floorplan published via Revit through Forge, but decided instead to use an existing mechanism where we determine an approximate floorplan based on room volumes. (Part of the reasoning was flexibility – we want to be able to use these graphics elsewhere, perhaps without the Forge viewer – but it's also because we don't necessarily want to force people to generate 2D sheets: if we can determine a rough floorplan from existing information, then there's real value in that.)

As a basis for this, I took two existing features inside Dasher (both of which were implemented primarily by my good friend and former colleague, Simon Breslav): one was the "room reference image", where we generate a reference image encoding the positions of rooms on a particular level:

This image (or rather these images, as we have one per level) is usually just held in memory: we perform a look-up when trying to find the room containing the current 3D location, which is especially useful when navigating a model in first person mode. (We have another reference image that helps us work out whether the cursor is currently over a sensor dot, at which point we can display a tooltip.)

Needless to say – for an image that's usually not displayed – the screenshot below was taken while we were displaying the room reference image for debugging purposes.

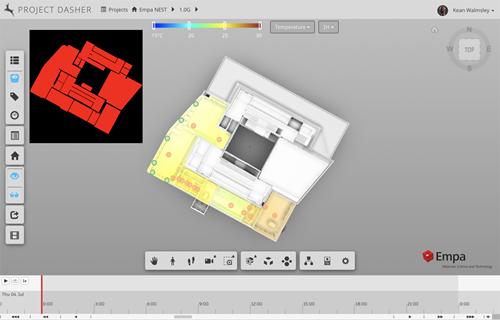
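To make the look-up a bit more concrete, here's a minimal sketch in Python of how a room reference image can be queried. This is not Dasher's actual code: the class name, the RGB encoding of room IDs, the row ordering and the tiny 4x2 test image are all assumptions made for illustration. The idea is simply to map a world-space X/Y position into pixel coordinates using the level's bounds, then decode the room ID stored at that pixel.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

RGB = Tuple[int, int, int]


def encode_room_id(room_id: int) -> RGB:
    """Pack a room ID into a 24-bit RGB color (assumed encoding)."""
    return ((room_id >> 16) & 0xFF, (room_id >> 8) & 0xFF, room_id & 0xFF)


def decode_room_id(color: RGB) -> int:
    """Unpack a 24-bit RGB color back into a room ID."""
    r, g, b = color
    return (r << 16) | (g << 8) | b


@dataclass
class RoomReferenceImage:
    """Hypothetical top-down ID image for one level (ID 0 means 'no room')."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float
    width: int
    height: int
    pixels: List[RGB]  # row-major, row 0 at min_y (an assumption)

    def room_at(self, x: float, y: float) -> Optional[int]:
        """Return the ID of the room containing world position (x, y), if any."""
        if not (self.min_x <= x < self.max_x and self.min_y <= y < self.max_y):
            return None
        # Map the world position into pixel coordinates, clamping for safety.
        col = int((x - self.min_x) / (self.max_x - self.min_x) * self.width)
        row = int((y - self.min_y) / (self.max_y - self.min_y) * self.height)
        col = min(col, self.width - 1)
        row = min(row, self.height - 1)
        room_id = decode_room_id(self.pixels[row * self.width + col])
        return room_id if room_id != 0 else None


# A tiny 4x2 image covering an 8x4 area: room 1 on the left, room 2 on the
# right, with one empty pixel (for example a wall or a void).
ids = [1, 1, 2, 2,
       1, 0, 2, 2]
image = RoomReferenceImage(0.0, 0.0, 8.0, 4.0, 4, 2,
                           [encode_room_id(i) for i in ids])
```

With this in place, `image.room_at(1.0, 1.0)` finds room 1 and `image.room_at(7.0, 1.0)` finds room 2, while a position over the empty pixel or outside the level's bounds gives `None`. The appeal of the approach is that each query is a constant-time array access, whatever the shape of the rooms.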

The basic approach where we input room volumes – and use these with an orthographic camera looking straight down to generate an image – is extremely relevant to generating a 2D heatmap. The other piece of the puzzle was to be able to take the custom shader we use to generate our "surface shading" effects and use that – once again – with an orthographic camera to create a 2D view. Luckily this "just worked" for 2D, which was a pleasant surprise, to say the least.
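For readers who'd like a feel for what a top-down 2D heatmap involves, here's a rough sketch in Python. I should stress that this is not how Dasher's shader works: inverse distance weighting is used purely as a stand-in for whatever blending the surface shader performs, and the function names, sensor positions and values are all hypothetical. The sketch samples the center of each pixel in a top-down grid, blends the nearby sensor readings, and maps the result onto a simple blue-to-red ramp.

```python
from typing import List, Sequence, Tuple

Sensor = Tuple[float, float, float]  # (x, y, value): hypothetical layout


def idw_value(x: float, y: float, sensors: Sequence[Sensor],
              power: float = 2.0) -> float:
    """Blend sensor values at (x, y) using inverse distance weighting."""
    if not sensors:
        raise ValueError("at least one sensor is required")
    weighted_sum = 0.0
    weight_total = 0.0
    for sx, sy, value in sensors:
        dist_sq = (x - sx) ** 2 + (y - sy) ** 2
        if dist_sq == 0.0:
            return value  # exactly on a sensor: use its reading directly
        weight = 1.0 / dist_sq ** (power / 2.0)
        weighted_sum += weight * value
        weight_total += weight
    return weighted_sum / weight_total


def heatmap(bounds: Tuple[float, float, float, float], width: int, height: int,
            sensors: Sequence[Sensor]) -> List[List[float]]:
    """Sample a width x height top-down grid at each pixel's center."""
    min_x, min_y, max_x, max_y = bounds
    dx = (max_x - min_x) / width
    dy = (max_y - min_y) / height
    return [[idw_value(min_x + (col + 0.5) * dx, min_y + (row + 0.5) * dy,
                       sensors)
             for col in range(width)]
            for row in range(height)]


def to_rgb(value: float, lo: float, hi: float) -> Tuple[int, int, int]:
    """Map a value onto a blue (low) to red (high) ramp, clamped to [lo, hi]."""
    t = max(0.0, min(1.0, (value - lo) / (hi - lo)))
    return (round(255 * t), 0, round(255 * (1.0 - t)))


# Two made-up CO2 sensors (in ppm) at either end of an 8x2 strip of floor.
sensors = [(1.0, 1.0, 400.0), (7.0, 1.0, 1200.0)]
grid = heatmap((0.0, 0.0, 8.0, 2.0), 4, 1, sensors)
colors = [[to_rgb(v, 400.0, 1200.0) for v in row] for row in grid]
```

In a real implementation you'd want to restrict the shading to pixels that fall inside a room (a room reference image is one way to get that mask) and do the per-pixel work on the GPU, which is what rendering a shader through an orthographic camera gives you.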

This feature has now been implemented in Dasher, and you can turn it on for yourself via the settings dialog:

The size of the heatmap can be set in the project settings, for now: this isn't ideal, so I'm exploring different ways of making this user-resizable.

The heatmap animates nicely alongside the 3D version, which is really cool. Here's an animation of a larger version of the heatmap – this time showing CO2 values – along with the 3D view:

This feature is very much experimental, in the sense that it's really about enabling a particular exhibit that will probably need a 2D view of sensor data, but I do think it's got some real potential. In the next post (on this topic, anyway), I'll take a look at some options for allowing the user to position and resize these 2D heatmaps.
