I've been a little slow getting my AU material together this year (I've been called onto more pressing issues fairly consistently over the last weeks/months), so I'm very much up against Monday's content submission deadline. I'll certainly have the handouts ready, but the presentations will have to come later.

As I've done in the past, I like to post my handouts here for people to take a look at (and provide feedback on in time for me to correct prior to the event ;-).

Today's post is the handout for my AutoCAD user-focused class, "AC427-4 - Point Clouds on a Shoestring". This is the last of my classes at this year's conference, but the first I wanted to have my handout completed for (the others are actually somewhat more straightforward, as they focus on a) our Plugins of the Month and b) the implementation of the BrowsePhotosynth application, two topics with which I'm intimately familiar :-). This class currently has 123 people registered (vs. 61 for the implementation-centric class and an unknown number for the virtual class), so it should be a good crowd. Oh, and I'm working on getting some goodies for at least some of the attendees, so do sign up, if you haven't already. 🙂

"Any sufficiently advanced technology is indistinguishable from magic."

– Arthur C. Clarke

Introduction

Point clouds have historically been generated by expensive laser scanning equipment. These devices are non-contact – as the object being scanned is not physically touched – and active in nature, as light is emitted by the laser rangefinder and the time for the reflected beam to return is measured, yielding the precise distance from the device (at least in the case of time-of-flight devices – there are also scanners that rely on triangulation to determine topology, using a camera to detect the location of a laser probing the surface of the object(s) to be captured). Time-of-flight devices often use mirrors to redirect the laser, reducing the need for the laser to move around. The reflected beams are ultimately directional in nature: you can't capture the back of an object without moving the object or the scanner and performing an additional scan, for instance.
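To make the time-of-flight idea a little more concrete, here's a minimal sketch of the underlying arithmetic: the beam travels to the surface and back, so the distance is half the round-trip time multiplied by the speed of light. The function name is my own invention for illustration, and real scanners clearly do a great deal more (calibration, noise filtering and so on).

```python
# Speed of light in a vacuum, in metres per second.
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def tof_distance_m(round_trip_seconds: float) -> float:
    """Distance to a surface given the measured round-trip time of a pulse.

    Hypothetical helper for illustration only: the pulse covers the
    distance twice (out and back), hence the division by two.
    """
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0


if __name__ == "__main__":
    # A timing difference of one nanosecond corresponds to roughly 15 cm.
    print(f"{tof_distance_m(1e-9):.4f} m")
```

This also hints at why such devices are costly: resolving distances at the centimetre level means timing the returning light to a fraction of a nanosecond.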

Such devices still tend to be quite expensive: even newer models, which are driving the price into the tens – rather than hundreds – of thousands of US dollars, are still considered costly for many purposes.

There are passive alternatives to using active capture technology: devices that employ two cameras to take stereoscopic images, devices that use a single camera but use varying light conditions and even systems that depend on analysing the shadows cast by objects from different directions. These devices still rely, however, on specialist equipment, often making them costly and impractical (although the world is changing quickly with the introduction of consumer-focused devices such as Kinect for Xbox 360, which captures depth by projecting infrared dots across your living room).

The most interesting category of technique that's currently emerging (and I'm sure some would argue it has very clearly emerged) is that employing computer vision or photogrammetry to build a point cloud from a simple set of photographs or stills from a video.

There are a number of freely-available tools that provide this kind of capability, including Autodesk's Project Photofly (accessible via Photo Scene Editor), Microsoft Photosynth, and open source tools such as Bundler and its counterpart PMVS2.

The approaches used by these tools are fundamentally fairly similar, so the description of the underlying techniques and the advice given in this document on how best to take advantage of them should apply to them all. That said, they're ultimately targeted at different markets (whether consumers, hobbyists or design professionals), so their usability and usefulness are likely to vary depending on the task, especially as their respective feature-sets evolve over time.

The Magic

These various technologies currently depend on the user providing a set of photographs (and at some point they will support video, too, if they do not already) for analysis. When a web service is used – such as with Photofly or Photosynth – it's common for some work to be done before uploading, either to check whether the image already exists on the server (and so avoid the unnecessary upload and processing) or to reduce the size of the image to avoid unnecessary network usage and server-side storage.

There are three major phases of the analysis process: feature detection/extraction, feature matching and 3D reconstruction.

The features detected in the first phase include various types of "interest points", edges, corners, blobs and ridges. A variety of algorithms are likely to be used to detect features.

Matching is performed across photos to determine the relative location of the images and – in due course – the position of the camera when each image was taken.

3D reconstruction can take place once features are matched across images: if a feature occurs in 3+ images it can usually be placed accurately in space and will contribute points to the cloud.
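As a rough illustration of that last point, here's a small sketch that takes the output of a (hypothetical) matching phase and keeps only those features seen in three or more distinct images. The data layout – simple `(feature_id, image_name)` pairs – and the function name are assumptions of mine; none of the tools mentioned expose their data in this form, and the real reconstruction step is far more involved.

```python
from collections import defaultdict


def reconstructable_features(matches, min_views=3):
    """Return features observed in at least `min_views` distinct images.

    `matches` is an iterable of (feature_id, image_name) pairs, a
    made-up stand-in for the output of the feature matching phase.
    """
    views = defaultdict(set)
    for feature_id, image_name in matches:
        # A set ignores repeated sightings of a feature in the same image.
        views[feature_id].add(image_name)
    return {
        feature_id: sorted(images)
        for feature_id, images in views.items()
        if len(images) >= min_views
    }


if __name__ == "__main__":
    sample = [
        ("corner", "a.jpg"), ("corner", "b.jpg"), ("corner", "c.jpg"),
        ("blob", "a.jpg"), ("blob", "b.jpg"),
    ]
    print(reconstructable_features(sample))
```

In this sample only "corner" survives, as "blob" appears in just two images and so could not be placed reliably in space.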

A Quick Tour of Photosynth

With this example we're going to focus on Photosynth. This tool is ultimately a consumer-focused, crowdsourced imagery site, but has been used effectively by people wanting to perform 3D reconstructions. Do take a look at Photo Scene Editor on Labs to see what Autodesk is doing in this space: over time I'd expect this technology to evolve into something that is ultimately better suited to design professionals wanting to capture some small section of reality. 🙂

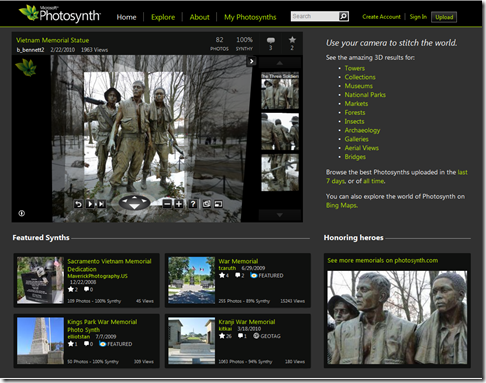

Photosynth is interesting because of the sheer wealth of content available. Browsing to the Photosynth website shows a host of detailed and often fascinating material:

The Photosynth site currently supports two types of content:

- Photosynths – 3D collections of 2D images, easily spotted by their Synthy value

  - Note: Photosynth's synthiness rating indicates the proportion of images in the set that have contributed points to the cloud: it does not say how many points have been contributed

- Panoramas – 2D images stitched together, easily spotted by their mega/gigapixel value

We're really interested in 3D, of course, so we'll limit our discussions to Photosynths.
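The synthiness rating mentioned in the note above can be sketched as a simple ratio. This follows the description given here rather than any published Photosynth formula, so treat the function and its parameter names as assumptions.

```python
def synthiness(images_contributing: int, total_images: int) -> float:
    """Proportion of images in a set that contributed points to the cloud.

    An illustrative reading of the rating as described in this handout,
    not Photosynth's actual implementation.
    """
    if total_images <= 0:
        raise ValueError("a synth needs at least one image")
    if not 0 <= images_contributing <= total_images:
        raise ValueError("contributing images must be between 0 and the total")
    return images_contributing / total_images


if __name__ == "__main__":
    # 45 of 50 photos contributing points would give a "90% synthy" set.
    print(f"{synthiness(45, 50):.0%}")
```

Note that two synths with the same rating can have wildly different point counts, which is why it's worth checking the point cloud view before importing.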

Let's take a closer look at the "synth" that happens to be highlighted on the main page: a set of images uploaded of a Vietnam Memorial Statue. Statues are often very good targets for capturing via photographs, as long as they have plenty of detail and are not too reflective.

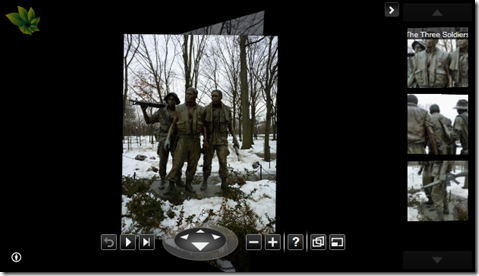

You can look at individual images in the synth by using the various navigation controls at the bottom and the thumbnails on the right. We're interested in seeing the quality of the point cloud behind this set of images:

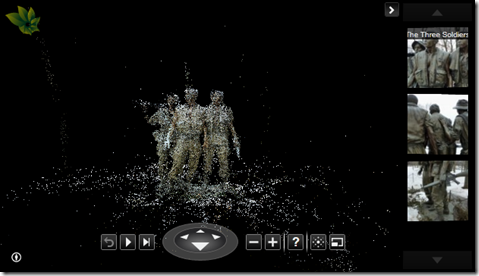

Once we select the "Point Cloud" item shown by hovering over the "current view" icon that's second from the right in the bottom panel, we will see our Point Cloud view:

Bringing a Photosynth Point Cloud into AutoCAD

The point cloud format used on the Photosynth servers was originally decoded by binary millenium and later shaped into a more complete exporter by Christoph Hausner. The code I've implemented in the BrowsePhotosynth application to bring down the data from Photosynth is based on both approaches (as I was not aware of Christoph's work when I started). If you want more complete access to the data stored in Photosynth, Christoph's exporter is certainly worth a look. For the sake of simplicity the BrowsePhotosynth application only brings down the primary point cloud, but for large models there are often additional – typically smaller – clouds available that may contain interesting information. But as they are disconnected from the main one (and are hence separate clouds) it doesn't really make sense to try to overlay them without some user involvement (which is out of scope for a simple tool such as this).

Let's now install and use the "BrowsePhotosynth for AutoCAD" application.

Start by navigating across to the ADN Plugin of the Month catalog on Autodesk Labs. Look for the BrowsePhotosynth plugin from October 2010.

You will need to be signed in to download the plugin (it's free to register on Autodesk Labs). Once you've agreed to the terms & conditions, you'll be able to download the ZIP to your local hard-drive:

The ZIP contains an installer (which can be run directly from the MSI or via the Setup.exe file) and a ReadMe.txt file.

Installing the application enables users of AutoCAD 2011 (or higher, and this includes AutoCAD-based verticals) to use the BROWSEPS command to browse and download point clouds from Photosynth.

Typing BROWSEPS launches our browsing dialog inside AutoCAD (you may notice below that I've manually added a "BrowsePS" item to the Point Cloud ribbon inside AutoCAD using the CUI editor – this is not currently added by the installer):

On the left of the dialog we have an embedded web-browser containing the main Photosynth web-site. On the right we have a list that gets populated with the point clouds detected as you navigate through the site on the left. When you feel like importing a point cloud, just click on it in the list on the right and it will be imported into AutoCAD.

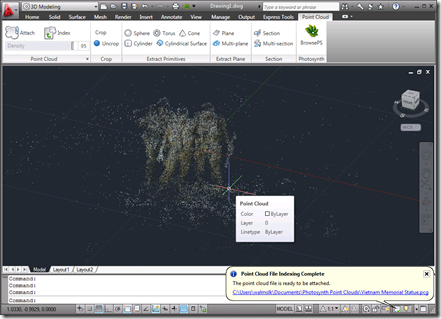

Here's the text we see on the command line as the point cloud gets downloaded and processed:

Command: _.BROWSEPS

Command: _.IMPORTPS Enter URL of first Photosynth point cloud:

"http://mslabs-086.vo.llnwd.net/d2/photosynth/m6/collections/07/99/db/0799dbca-0

8a8-4bb2-bdce-b00db6069828.synth_files/points_0_0.bin" Enter name of Photosynth

point cloud: "Vietnam Memorial Statue"

Accessing Photosynth web service to get information on "Vietnam Memorial

Statue" point cloud(s)...

1 point cloud found. This point cloud (containing 10 files) will now be

imported.

Processed points_0_4.bin containing 5000 points.

Processed points_0_1.bin containing 5000 points.

Processed points_0_2.bin containing 5000 points.

Processed points_0_0.bin containing 5000 points.

Processed points_0_3.bin containing 5000 points.

Processed points_0_9.bin containing 1293 points.

Processed points_0_6.bin containing 5000 points.

Processed points_0_7.bin containing 5000 points.

Processed points_0_5.bin containing 5000 points.

Processed points_0_8.bin containing 5000 points.

Imported 46293 points from 10 files in 00:00:01.6646656.

Creating a LAS from the downloaded points.

Indexing the LAS and attaching the PCG.

Command: _.POINTCLOUDINDEX

Path to data file to index:

C:/Users/walmslk/AppData/Local/Temp/tmp9CB2.tmp/points.las Path to indexed

point cloud file to create

<C:\Users\walmslk\AppData\Local\Temp\tmp9CB2.tmp\points.pcg>:

C:/Users/walmslk/Documents/Photosynth Point Clouds/Vietnam Memorial Statue.pcg

Converting C:\Users\walmslk\AppData\Local\Temp\tmp9CB2.tmp\points.las to

C:\Users\walmslk\Documents\Photosynth Point Clouds\Vietnam Memorial Statue.pcg

in the background.

Command:

Command: Enter path to PCG: Enter path to LAS:

Waiting for PCG creation to complete...

File inaccessible 9933 times.

Command: _.-POINTCLOUDATTACH

Path to point cloud file to attach: C:/Users/walmslk/Documents/Photosynth Point

Clouds/Vietnam Memorial Statue.pcg

Specify insertion point <0,0>:0,0

Current insertion point: X = 0.0000, Y = 0.0000, Z = 0.0000

Specify scale factor <1>:1

Current scale factor: 1.000000

Specify rotation angle <0>:0

Current rotate angle: 0

1 point cloud attached

Command:

Command:

Enter an option [set Current/Saveas/Rename/Delete/?]:

Enter an option

[2dwireframe/Wireframe/Hidden/Realistic/Conceptual/Shaded/shaded with

Edges/shades of Gray/SKetchy/X-ray/Other] <2dwireframe>:

Command:
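The log above suggests a simple naming pattern for the files making up a cloud: `points_0_0.bin` through `points_0_9.bin` for the ten files in this case. Here's a minimal sketch of how the remaining URLs might be derived from the first one. To be clear, this is an assumption based purely on the file names in the log, not the actual BrowsePhotosynth code; the function name and the example URL are made up.

```python
import re


def point_file_urls(first_url: str, file_count: int) -> list[str]:
    """Derive the URLs of all point files from the URL of the first one.

    Assumes (from the log output only) that files are named
    points_<cloud>_<index>.bin, with indices running from 0 upwards.
    """
    match = re.search(r"points_(\d+)_0\.bin$", first_url)
    if match is None:
        raise ValueError("URL does not end in points_<cloud>_0.bin")
    cloud = match.group(1)
    prefix = first_url[:match.start()]
    return [f"{prefix}points_{cloud}_{i}.bin" for i in range(file_count)]


if __name__ == "__main__":
    # Placeholder URL for illustration, not a real Photosynth address.
    first = "http://example.com/demo.synth_files/points_0_0.bin"
    for url in point_file_urls(first, 10):
        print(url)
```

It's also worth noting from the log that every file but the last holds 5000 points (9 × 5000 + 1293 = 46293), and that the files are processed out of order, which is consistent with them being downloaded in parallel.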

And here is the point cloud inside the AutoCAD editor:

[Much of the below advice has been borrowed from the Photosynth Photography Guide and the Autodesk Labs tutorial on creating 3D models from Photographs.]

The results you get out of the various systems will depend a lot on the photographs you've taken. Here are some guidelines and "rules of thumb" to help you maximise the number of images from your set that will contribute points to your cloud.

- The Rule of Three

  - Each part of the scene should appear in three images taken from different locations, to aid with its placement in 3D space

- Take photos from multiple viewpoints

  - If you stay in the same spot, you'll end up with little more than a panorama

- But change the viewpoint progressively

  - Keeping to an angle of about 10-15 degrees (out of 360) between successive shots helps a lot: larger gaps in the angle make matching harder

- Lots of overlap

  - Aim to overlap images by about 50%

- Lots of wide shots

  - Close-ups are important for detail, but plenty of wide-angled shots will really help the reconstruction process

- Avoid foreground objects

  - It's really better to have clear shots of the subject, where possible

- Focus

  - It probably goes without saying, but sharp images are better than blurred ones
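To get a feel for what the 10-15 degree guideline above means in practice, here's a quick back-of-the-envelope sketch for the number of photos needed to walk all the way around a subject. The function name is hypothetical, and this only counts a single ring of shots at one height: wide shots and close-ups come on top.

```python
import math


def shots_for_full_orbit(step_degrees: float) -> int:
    """Minimum number of photos for a full circle at a given angular step."""
    if not 0 < step_degrees <= 360:
        raise ValueError("step must be between 0 (exclusive) and 360 degrees")
    return math.ceil(360 / step_degrees)


if __name__ == "__main__":
    for step in (10, 15):
        print(f"{step} degrees between shots: {shots_for_full_orbit(step)} photos")
```

So a complete circuit of a statue, say, works out at somewhere between 24 and 36 photos.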

Here is some other advice related to image editing:

- Rotate images appropriately before uploading

  - Having the images oriented upwards helps

- Don't crop

  - You can edit/retouch/recolor, but don't crop

- Avoid watermarking, etc.

  - Having repeated copyright notices, for instance, will cause issues

- No need to resize images

  - That said, Photosynth resizes down to 2 megapixels and Photofly to 3 megapixels: more than that does not contribute to the point cloud (although it may benefit 2D viewing)
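If you're curious what those megapixel limits mean for a given camera, here's a small sketch that works out the dimensions an image would end up with when scaled to fit a pixel budget while keeping its aspect ratio. I'm assuming a megapixel means 1,000,000 pixels and that the services scale uniformly; how they actually resample images isn't something I can vouch for, and the function name is my own.

```python
import math


def fit_to_megapixels(width: int, height: int, max_megapixels: float) -> tuple[int, int]:
    """Scale (width, height) down uniformly to fit within a megapixel budget.

    Images already within the budget are returned unchanged.
    """
    max_pixels = max_megapixels * 1_000_000
    if width * height <= max_pixels:
        return width, height
    # Areas scale with the square of the linear factor, hence the square root.
    scale = math.sqrt(max_pixels / (width * height))
    # Truncating keeps the result within the budget.
    return int(width * scale), int(height * scale)


if __name__ == "__main__":
    # A 12 megapixel photo against the 2 and 3 megapixel limits quoted above.
    print(fit_to_megapixels(4000, 3000, 2.0))
    print(fit_to_megapixels(4000, 3000, 3.0))
```

The practical upshot is that a high-resolution camera won't by itself give you a denser point cloud from these services: better coverage of the subject matters more.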

Now for the choice of subject.

Things that this technology handles well…

- Natural, visual textures

  - Textures that have a lot of visual detail but are low on repetition are good

- Lots of unique details

  - Visual variety is a very good thing

Things that this technology struggles with…

- Uniformity

  - Uniform areas, 2D edges and corners get ignored

- Repetition

  - Repeated details and textures are confusing

- Shininess

  - Reflective surfaces are difficult and you can forget mirrors

- Transparency

  - Glass is definitely problematic to capture

Creating a Synth

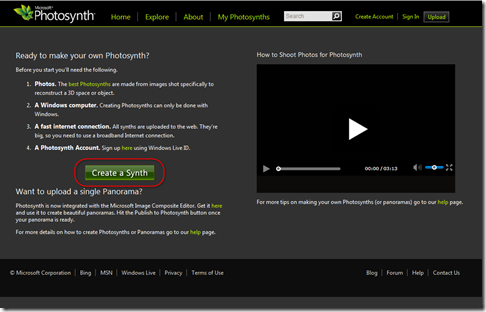

To get started creating your first synth, you will need to sign in using a Microsoft Live account (I just use my Hotmail account) to keep track of your synths. Now you should be able to select "Create Synth" from the "Upload" page:

Photosynth's user interface for creating a new synth is fairly straightforward. Much of the additional information can be modified later – the photos are the really important thing, at this stage.

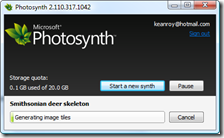

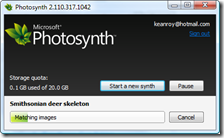

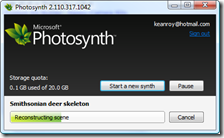

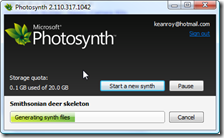

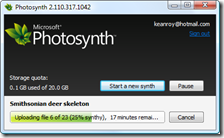

Once you've selected the images to upload and specified the other data, the Synth process can start:

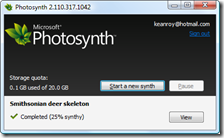

From the time the synth is available to view – assuming you have made it public – you should be able to find and download it using the BrowsePhotosynth for AutoCAD plugin.
